HEAT is dedicated to providing customers with proven cloud solutions that make their businesses more efficient, compliant, and secure.

The company helps IT, HR, Facilities, Customer Service, and other enterprise functions simplify and automate their business processes to improve service quality. It also manages and secures endpoints to detect and protect against threats to business continuity.

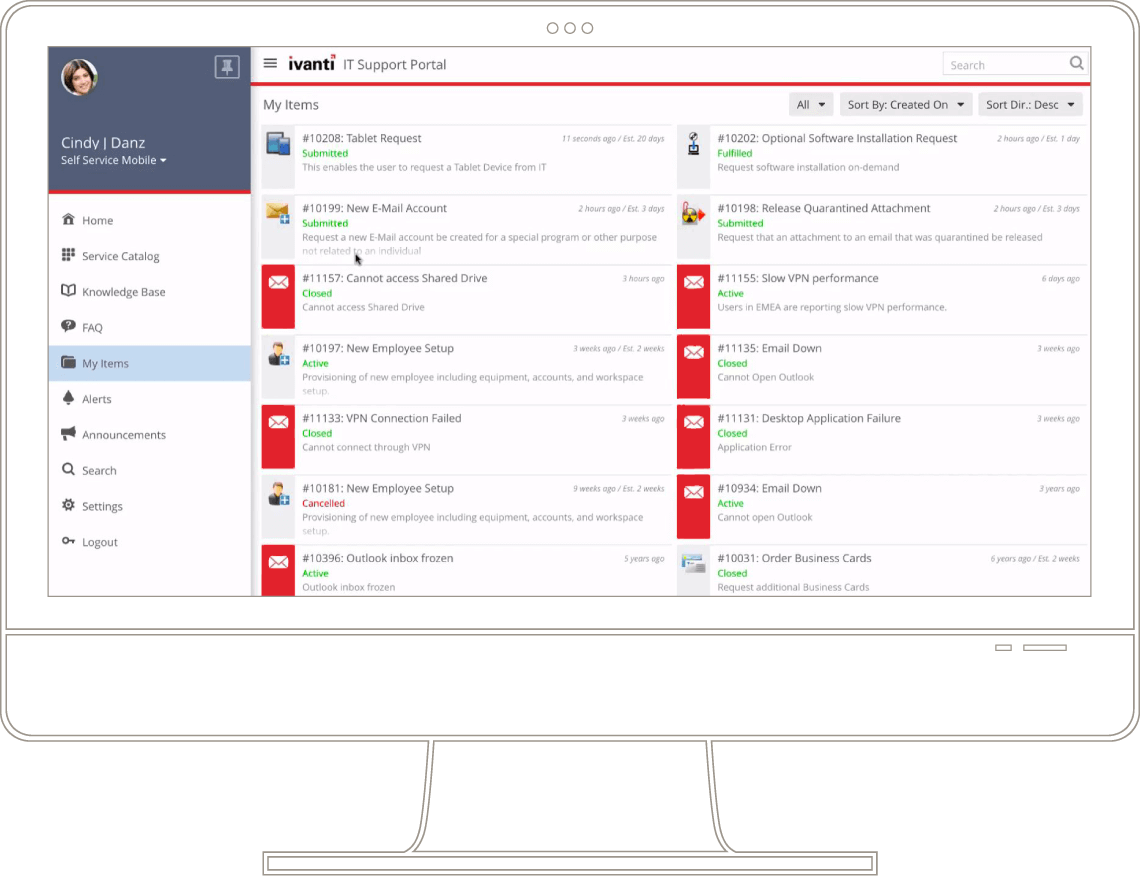

In January 2017, as part of its acquisition of LANDESK, Clearlake Capital contributed its portfolio company HEAT Software to the new platform investment in LANDESK. As a result, a new company was established under the name Ivanti.